The term “ubiquitous computing” was coined in 1988 by Mark Weiser, a scientist at the Xerox laboratories in Palo Alto (the birthplace of numerous digital technologies), who later set out the idea in a 1991 article titled The Computer for the 21st Century. Weiser opens the article with the observation that “The most profound technologies are those that disappear. They weave themselves into the fabric of everyday life until they are indistinguishable from it.”

Ten years have passed since the dawn of the 21st century, and ubiquitous computing is, at last, slowly becoming a reality.

Lev Manovich wrote about the computerisation of media in The Language of New Media. Yet the process of computerisation applies to the entire world. The number-crunching power of computers affects every part of our lives, transforming different processes and phenomena into streams of computer data. (We have already discussed the influence of digital media on other media and the overall consequences of digitisation.) And while the effects of computerisation can differ radically from case to case, its influence is becoming ubiquitous.

We can imagine computerisation as reality being affected by a single, enormous source of power; Alexander Galloway writes that opposing computerisation is like opposing gravity. It is better, however, to think of computerisation as a dispersed force: a contemporary, digital version of the ancient concept of pneuma.

Computerisation is paradoxical in that the key technological agent of our times — as predicted by Weiser — disappears in the very process. On the one hand, computing power is now incorporated into myriad gadgets. On the other, that same power is shifting from personal computers to the “cloud” — a currently fashionable term used to describe the network of servers onto which our applications and data are increasingly being offloaded. We used to keep our e-mails on our own computers; now Gmail stores them for us on the “internet cloud”, distributing them amongst hundreds of servers. Each individual server is a computer just like any other. Networked together in a manner that remains transparent to the end user, they become an abstract yet individualised “computing cloud” that we interact with when we check our messages.

At the same time, the computer’s functions are becoming increasingly blurred, quickly turning the very word “computer” (literally, a machine that processes numerical data) into a complete misnomer. We’ve already written about the remediating role of computers, a quality that inspired Alan Kay, a pioneer of personal computing, to describe them as “metamedia”. Bruce Sterling, a writer and design theorist who is likely an entire century ahead of his time, takes this claim one step further, stating that the computer is best explained as “something completely new”. In his book Shaping Things, Sterling describes computers as digital gizmos, “highly unstable, user-alterable, baroquely multifeatured objects, commonly programmable and generally of a brief life-span”.

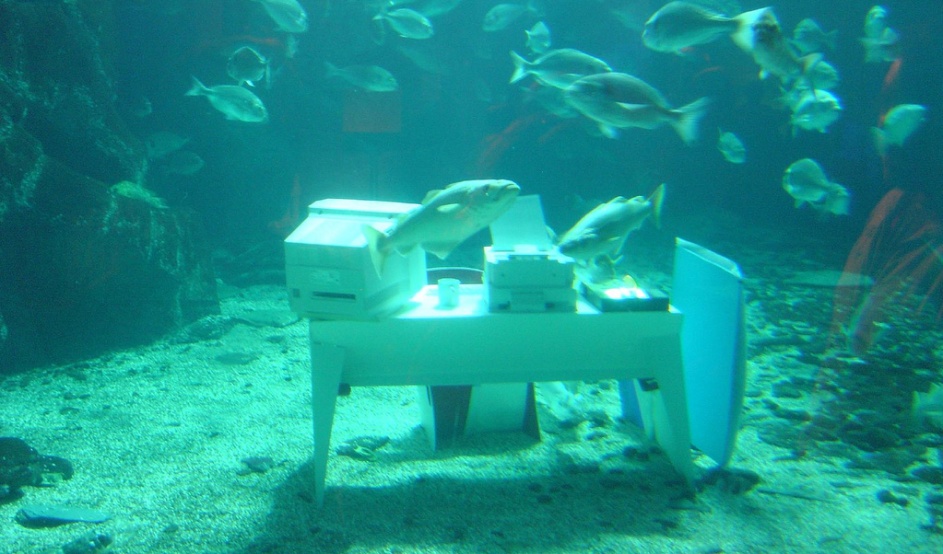

According to Sterling, gizmos are exceptional among other objects in that they can communicate with each other when they are plugged into a network. But this state of affairs is about to change, if we are to believe the vision known as the “internet of things”, a refreshed take on the two-decade-old idea of ubiquitous computing. The concept of an “internet of things” predicts that even the most mundane objects will contain computer processors. This will enable these objects to collect and transmit data and to communicate with the rest of the computerised “internet of things”.

The “internet of things” is already being implemented in nascent form: the European Commission has taken the first steps towards regulating it, and logistics providers attach digital ID tags to packages, enabling their precise tracking and management. It is difficult to foresee the changes that the computerisation of physical objects will bring. We can, however, assume that the phenomena that are characteristic of 2.0 culture will soon apply to more than just symbolic content and isolated “virtual reality”.

The latter term, likely the main techno-fantasy of the 1990s, shows just how wrong we were to imagine cyberspace as an area that would be completely separate from reality. Ubiquitous computing seems to have done away with that vision once and for all, offering an augmented, rather than virtual, space that fuses the physical and digital worlds. These spaces have always been intertwined: the history of media is largely the history of cities, and urban streets have always been channels through which data flowed (originally in the form of money, then electricity and radio waves, and finally fibre optics and Wi-Fi). The difference is that we now carry in our pockets devices that can see and understand parts of this “traffic” that remain outside the reach of our own perception. The growth of geolocation technology has made some data accessible only from the right place in space (we mentioned this in the previous instalment, when we talked about Yellow Arrows and QR codes posted in urban spaces; other examples include applications like BionicEye).

Perhaps sci-fi films aren’t the best metaphor for the world of computer networks; a better one may be Disney cartoons, in which everyday objects conduct animated discussions with each other. The internet has become widely available, even commonplace. Its ubiquity is not just convenient; it is taken for granted, and the internet is treated like just another utility, alongside water and electricity. We use the internet without giving it a second thought, and it isn’t until our connection goes down that we realise just how deeply ingrained the service is in our lives. Trips to remote places outside the range of communications networks and ubiquitous computing are now treated as tempting adventures, not unlike stays at agro-tourism destinations, where water needs to be fetched from the well. But what if the well turns out to be a computer?

translated by Arthur Barys